ChatGPT can answer multiple-choice questions, here’s how

While many generative AI solutions are available now, OpenAI’s ChatGPT stands tall as a frontrunner. Its prowess in understanding and generating human-like text seems far ahead of several other solutions. Among all its capabilities, understanding how it handles assessment formats, such as multiple-choice questions, is a fundamental step in learning how to get the most out of the chatbot. Multiple-choice questions are a standard assessment format that ChatGPT can help you answer. Let’s find out more.

Quick Answer

ChatGPT is able to answer multiple-choice questions through its extensive training on a vast range of textual data. Additionally, because GPT-4 in ChatGPT Plus can access the internet, Plus users can also get answers to detailed questions that depend on up-to-date information.

ChatGPT can answer multiple-choice questions

ChatGPT is adept at comprehending the context and nuances of assessment formats. As a result, it can often provide accurate and contextually relevant answers. By leveraging its extensive training on diverse textual data, ChatGPT can analyze the given options and select the most suitable response based on the information provided. This capability, thanks to the abilities of large language models like GPT-4, makes ChatGPT a versatile tool for educational purposes, test preparation, and information retrieval. It performs especially well on true/false questions.

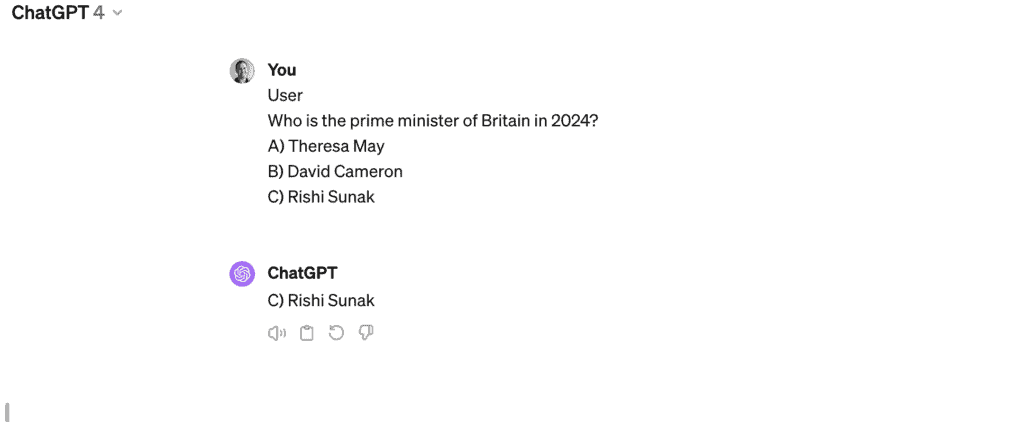

Whether you need to fill out a form quickly or you’d like some assistance with your studies, ChatGPT can help. However, it is important to note that there are some limitations when using this feature. The free version of ChatGPT, which uses the GPT-3.5 model, is trained on a diverse range of internet text, including websites, books, articles, and other text sources, to develop a broad understanding of human language. That training data only runs up until September 2021. This means that while the free version of ChatGPT can give possible answers to multiple-choice quiz questions, you cannot rely on its accuracy, especially when it comes to questions about events after September 2021. If you ask the GPT-3.5 model of ChatGPT to answer a multiple-choice question about the current Prime Minister of France or similar questions, it will likely give an inaccurate answer.

However, by setting up a paid subscription to ChatGPT Plus, which utilizes the GPT-4 model, users will be able to access the internet via the chatbot, meaning that the information it retrieves to answer the question will be up to date. Therefore, if you’re hoping to use ChatGPT to answer multiple-choice questions that require detailed information from recent years, then joining ChatGPT Plus may be the option for you.

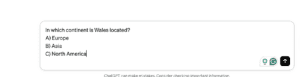

The images below demonstrate how the GPT-4 model (ChatGPT Plus/Team/Enterprise) and the GPT-3.5 model (free version of ChatGPT) respond to a multiple-choice question that requires recent information found on the internet.

How to use ChatGPT to answer multiple-choice questions

There are two ways to input a multiple-choice question into ChatGPT. The first option involves writing out the question in the text box, and the second involves using the file-inputting feature to upload the document (only available in the GPT-4 model).

Write out the question in the text box

This option is available in both GPT-3.5 and GPT-4 models.

Step

Open ChatGPT

Open ChatGPT in your web browser or mobile app. Then log in or use the account-free version of the platform.

Step

Input multiple-choice question in text box.

Input the question in the text box either by copying and pasting or typing it out.

Ensure that the question is in a clear format that ChatGPT will be able to understand.

Step

Select the arrow button

Select the arrow button on the right side of the text box to send the question to the chatbot.

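It should take a few seconds for the chatbot to process the question, then it will respond with its answer. As noted above, the answer is not guaranteed to be correct, so check it where accuracy matters.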
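The "clear format" mentioned in the steps above can be made concrete with a small helper. The following is only a sketch of one possible layout, not an official or required format: the function name `format_mcq_prompt`, the lettered `A)` labels, and the closing instruction are all assumptions made for illustration.

```python
from string import ascii_uppercase
from typing import List


def format_mcq_prompt(question: str, options: List[str]) -> str:
    """Lay out a multiple-choice question as a clearly labelled prompt."""
    if not 2 <= len(options) <= len(ascii_uppercase):
        raise ValueError("expected between 2 and 26 options")
    lines = [f"Question: {question.strip()}", ""]
    # Label each option A, B, C, ... on its own line.
    for letter, option in zip(ascii_uppercase, options):
        lines.append(f"{letter}) {option.strip()}")
    lines.append("")
    # Hypothetical instruction; adjust the wording to suit your needs.
    lines.append("Reply with the letter of the best option, then a one-sentence reason.")
    return "\n".join(lines)


prompt = format_mcq_prompt(
    "Which planet is closest to the Sun?",
    ["Venus", "Mercury", "Mars", "Earth"],
)
print(prompt)
```

The printed text can be pasted straight into the text box. Putting each option on its own line and asking for a single letter tends to make the reply easier to read, though the chatbot may still phrase its answer differently.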

Using the file inputting feature

Say you have a document containing a number of multiple-choice questions and want ChatGPT to answer them. With the paperclip icon, you can upload it directly.

This method requires GPT-4 features only found in ChatGPT Plus.

Step

Open ChatGPT

Open ChatGPT in your web browser or mobile app. Then sign into your account.

Step

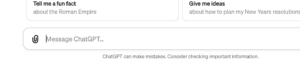

Locate the paperclip icon

Locate and select the paperclip icon in the bottom left corner of the text box.

Step

Select the file

Once the paperclip icon is selected, your device’s files should pop up. From there, you can navigate through your documents to find the correct file and select it.

Step

Add prompt

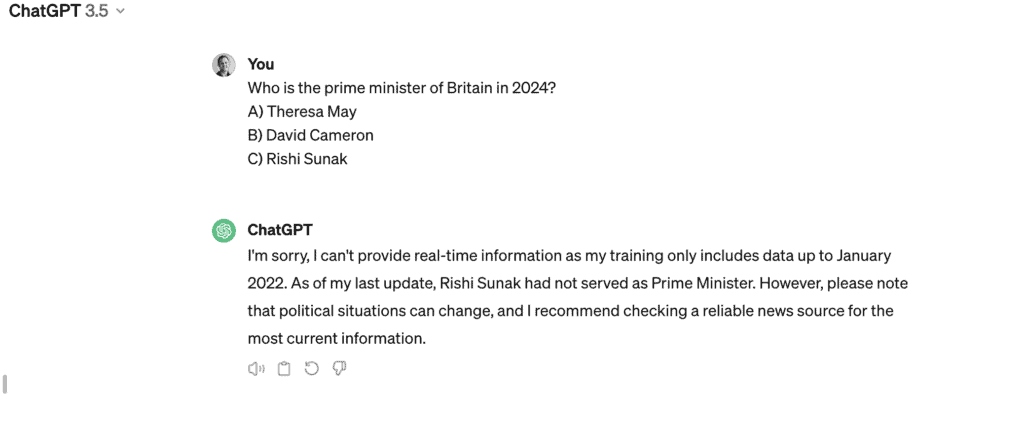

Once the file has been selected it should appear at the bottom of the text box. From there you can add a prompt related to the file, such as “Answer this question”.

Step

Send the file

From there you can send the file and ChatGPT should reply within a number of seconds.
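Whichever input method you use, the reply arrives as free text rather than a bare letter. If you are collecting answers to many questions, a rough heuristic like the one below can pull out the chosen option. This is a sketch under assumptions: the function name `extract_choice` and the phrasings it recognises are illustrative guesses, and real replies may be worded in ways it misses or misreads.

```python
import re
from typing import Optional


def extract_choice(reply: str, valid_letters: str = "ABCD") -> Optional[str]:
    """Return the first option letter the reply appears to commit to, else None."""
    letters = re.escape(valid_letters)
    patterns = [
        # "Answer: B", "the correct answer is (C)", "Option D".
        # Only the keyword is case-insensitive; the letter must be uppercase.
        rf"(?i:answer|option)\s*(?:is|:)?\s*\(?([{letters}])\b",
        # A reply that simply starts with the letter: "B) Mercury", "B." or "B".
        rf"^\s*\(?([{letters}])(?:[\).:]|\s*$)",
    ]
    for pattern in patterns:
        match = re.search(pattern, reply)
        if match:
            return match.group(1)
    return None


print(extract_choice("The correct answer is B) Mercury."))
print(extract_choice("(C) Paris, because it is the capital."))
print(extract_choice("I am not sure."))
```

When the function returns `None`, read the reply yourself rather than guessing. A reply that hedges between two options is better handled by a person.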

How good is ChatGPT at answering multiple-choice questions?

In an article published in JAMA Internal Medicine, a team of researchers found that the AI model scored, on average, more than four points higher than medical students on free-response clinical reasoning questions. Co-author Eric Strong, a hospitalist and clinical associate professor at Stanford School of Medicine, says:

“We were very surprised at how well ChatGPT did on these kinds of free-response medical reasoning questions by exceeding the scores of the human test-takers,”

Eric Strong

Co-author Alicia DiGiammarino expanded:

“with these kinds of results, we’re seeing the nature of teaching and testing medical reasoning through written text being upended by new tools.”

Alicia DiGiammarino

Nonetheless, there may still be tell-tale signs that an examination has been completed by AI. Although with multiple-choice answers it is hard to distinguish chatbots from humans, questions that require more creativity and greater analytical insight are usually better answered by humans. As studies by James Fern at the University of Bath show, chatbots can make nonsensical errors and often struggle to produce real-looking references in paragraph-long answers or to work through mathematical processes. ChatGPT’s scores are systematically lower than humans’ in this respect.
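If you want to see how the chatbot fares on your own question set, you can mark its answers against an answer key in the same way you would mark a student’s. The snippet below is a minimal sketch of that comparison. The function name `score_answers` and every answer in it are invented for illustration and are not taken from the studies mentioned above.

```python
from typing import Dict


def score_answers(given: Dict[int, str], answer_key: Dict[int, str]) -> float:
    """Return the percentage of questions in the key that were answered correctly."""
    if not answer_key:
        raise ValueError("answer key is empty")
    correct = sum(
        1
        for number, right in answer_key.items()
        # A missing answer counts as wrong; comparison ignores case and spaces.
        if given.get(number, "").strip().upper() == right.strip().upper()
    )
    return 100.0 * correct / len(answer_key)


# Made-up example data: question number -> chosen letter.
answer_key = {1: "B", 2: "D", 3: "A", 4: "C"}
chatbot = {1: "B", 2: "D", 3: "C", 4: "C"}
student = {1: "B", 2: "A", 3: "A"}  # question 4 left blank

print(f"chatbot: {score_answers(chatbot, answer_key):.0f}%")
print(f"student: {score_answers(student, answer_key):.0f}%")
```

A handful of questions will not tell you much, so use as large a question set as you can before drawing conclusions.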

If you’d like to find out more about how work completed with AI can be detected by universities, check out our comprehensive guide here.

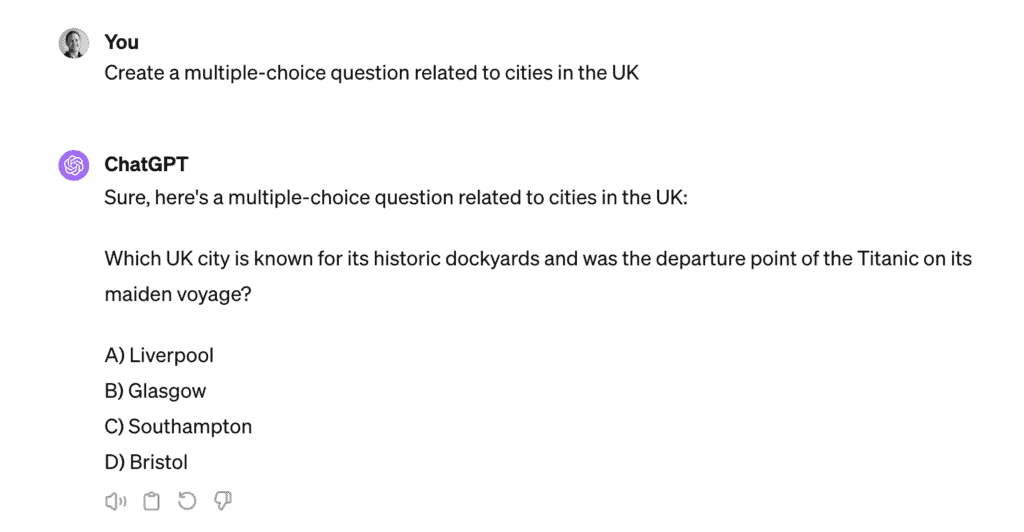

Can ChatGPT generate multiple-choice questions?

The capabilities of ChatGPT extend beyond mere question-answering. It can also contribute to creating multiple-choice questions, demonstrating the potential of artificial intelligence to assist educators, content creators, and researchers.

By generating well-structured multiple-choice questions, ChatGPT becomes a valuable asset in curriculum development, assessment design, and the creation of engaging learning materials. The AI chatbot’s ability to write human-like questions at various levels of complexity adds an extra dimension to its utility. Multiple question types can be created. This provides another means of testing real-life students’ abilities through the creation of multiple-choice tests, without laborious work from teachers and professors. The process is quick because the model draws on its training on a large corpus of internet text, and it could revolutionize the teaching process.

After sending this prompt, ChatGPT was able to generate this question within a number of seconds. This is an extremely helpful feature of ChatGPT and could come in handy when it’s your turn to write the next questions for quiz night.
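If you generate questions regularly, it can help to reuse one prompt wording and to check that each generated question has the shape you asked for. The sketch below shows one way to do both. The prompt wording, the function names `build_generation_prompt` and `looks_well_formed`, and the `Answer:` line convention are assumptions for illustration, and the check only covers layout, not whether the stated answer is factually right.

```python
import re
from string import ascii_uppercase


def build_generation_prompt(topic: str, num_options: int = 4) -> str:
    """Build a reusable prompt asking for one multiple-choice question."""
    last = ascii_uppercase[num_options - 1]
    return (
        f"Write one multiple-choice question about {topic}. "
        f"Give exactly {num_options} options, each on its own line, "
        f"labelled A) to {last}). "
        "Finish with a line of the form 'Answer: <letter>'."
    )


def looks_well_formed(question_text: str, num_options: int = 4) -> bool:
    """Check the option labels and the answer line of a generated question."""
    expected = list(ascii_uppercase[:num_options])
    # Option lines look like "A) ..." or "A. ...".
    found = re.findall(r"^([A-Z])[\).]\s+\S", question_text, flags=re.MULTILINE)
    answer = re.search(r"^Answer:\s*([A-Z])\b", question_text, flags=re.MULTILINE)
    return found == expected and answer is not None and answer.group(1) in expected


# A hand-written sample standing in for a chatbot reply.
sample = """What is the capital of France?
A) Berlin
B) Madrid
C) Paris
D) Rome
Answer: C"""

print(build_generation_prompt("European capitals"))
print(looks_well_formed(sample))
```

Always read generated questions before using them, since a question can pass this layout check and still contain a wrong answer or two defensible options.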

Other AI tools that can generate multiple-choice questions

Aside from ChatGPT, there are a number of dedicated AI tools out there that are able to generate multiple-choice questions and more. See the list below for some alternate options when trying to create such question formats.

Final thoughts

Online chatbots are the fascination of the entire internet. ChatGPT’s influence cannot be overstated, but it won’t replace doctors wholesale. Chatbots are great for open-ended questions (free-response questions) with original answers, the kind of thing that calculators and Google can’t solve. However, case-based questions involving a particular case study or clinical reasoning cases are best left to medical professionals.

In that case, for now, it’s fun to see how ChatGPT’s clinical practice answers compare to those of students, but it also poses worries about the future test-taking integrity of tomorrow’s doctors.